CloneSpy displays just enough options by default, it's also free and can be run in "portable" mode without being fully installed, which we tend to like for this kind of utilities.įor a more intuitive interface with simpler functionality than the above, we like Wise Duplicate Finder: We've installed all of the above and unless you're after some specific feature, CloneSpy gets our recommendation for having a light, albeit somewhat cluttered interface. Duplicate Cleaner Pro/Free (15 day trial).You're probably going to need one of these tools. Third party tools to find duplicate files Note however we're not as fond of CCleaner as we used to be and there are better alternatives these days. If you'd rather not add any additional third party software to your system or learn your way around a new file explorer, it's worth mentioning that CCleaner has a duplicate file finder built in (Tools > Duplicate Finder), if you happen to use that already. Those of you using the powerful File Explorer alternative Total Commander may know already that it includes the ability to search for duplicate files (it's on the second search page) among the dozens of other features it provides over the Windows File Explorer aimed at power users. This isn't helpful, of course, if you don't know which files have duplicates. Without installing third party software, your only option is running a search for a specific file via Windows Explorer and manually deleting the duplicates that appear. While there are many options for accomplishing this sort of task with batch files or PowerShell scripts, we assume most people would prefer something that doesn't involve a command prompt. Windows doesn't make it easy to deal with duplicate files all by itself. Deleting duplicate files on your system could easily result in clean out that is similarly sizable if only a few large files are found. 
Thanks to Kenward Bradley’s one-liner which sparks the idea in me to write this script.We've covered many ways that you can save space on your storage drives over the years, most recently discussing how to manually go through large files and testing cleanup utilities, resetting Windows to its default state without losing your files, and methods for deleting the Windows.old backup, in all scenarios potentially reclaiming several gigabytes of storage in the process. Selected files moved to C:\Duplicates_$date" Destination $env:SystemDrive\Duplicates_$date -Force Path $env:SystemDrive\Duplicates_$date -Force Selected files will be moved to C:\Duplicates_$date" ` "Select files (CTRL for multiple) and press OK. $d.Group | Select-Object -Property Path, Hash $duplicates = Get-ChildItem $filepath -File -Recurse ` $filepath = Read-Host 'Enter file path for searching duplicate files (e.g. # Find Duplicate Files based on Hash Value # # Author: Patrick Gruenauer | Microsoft MVP on PowerShell # Selected files will be moved to new folder C:\Duplicates_Date for further review. # find_ducplicate_files.ps1 finds duplicate files based on hash values. Copy the code to your local computer and open it in PowerShell ISE, Visual Studio Code or an editor of your choice. find_duplicate_files.ps1Īnd here is the code in full length. You will again see a new window appearing that shows the moved files for further review Afterwards the duplicate files are moved to the new location.All selected files will be moved to C:\DuplicatesCurrentDate A window will pop-up to select duplicate files based on the hash value.This folder is our target for searching for duplicate files Enter the full path to the destination folder. Make sure your computer runs Windows PowerShell 5.1 or PowerShell 7. With my script in hand you are able to perform the described scenario.

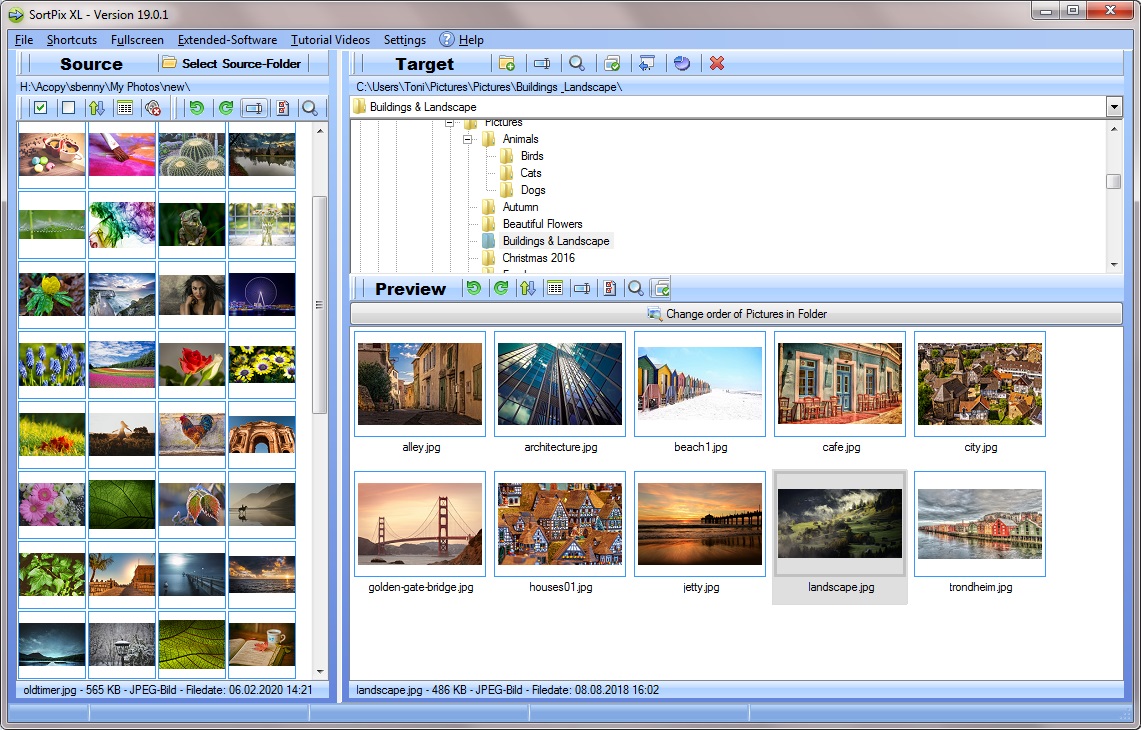

We will search duplicate files and then move them to a different storage location for further review. I want to change that with you in this blog post.

Files are accidentally or deliberately moved from location to location without first considering that these duplicate files consumes more and more storage space. Big data usually means a huge number of files such as photos and videos and finally a huge amount of storage space.

We are living in a big data world which is both a blessing and a curse.
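Before moving or deleting anything, it can help to estimate how much space the duplicates actually occupy. The following is a minimal, non-interactive sketch of the hash-grouping idea, not part of the original script. The function name `Get-DuplicateSummary` and its output property names are hypothetical, and it assumes that the default SHA256 algorithm of `Get-FileHash` is an acceptable way to decide that two files are identical.

```powershell
# Hypothetical helper (not from the original script): reports each group of
# identical files and how many bytes could be reclaimed by keeping one copy.
function Get-DuplicateSummary {
    param(
        [Parameter(Mandatory)]
        [string]$Path
    )

    # Hash all files below $Path, group them by hash, and keep only groups
    # that contain more than one file.
    Get-ChildItem -LiteralPath $Path -File -Recurse -ErrorAction SilentlyContinue |
        Get-FileHash |
        Group-Object -Property Hash |
        Where-Object Count -GT 1 |
        ForEach-Object {
            $files = $_.Group.Path
            # Files in one group have identical content, hence identical size.
            $size = (Get-Item -LiteralPath $files[0]).Length
            [pscustomobject]@{
                Hash             = $_.Name
                Copies           = $_.Count
                ReclaimableBytes = $size * ($_.Count - 1)
                Paths            = $files
            }
        }
}
```

As a usage example, `Get-DuplicateSummary -Path C:\Temp | Sort-Object ReclaimableBytes -Descending` lists the largest groups first (the path `C:\Temp` is only a placeholder). Because the function changes nothing on disk, it can be run safely before deciding which copies to move.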
